The AI Enablement Brief · Apr 14, 2026

The Model Question Is the Wrong Question

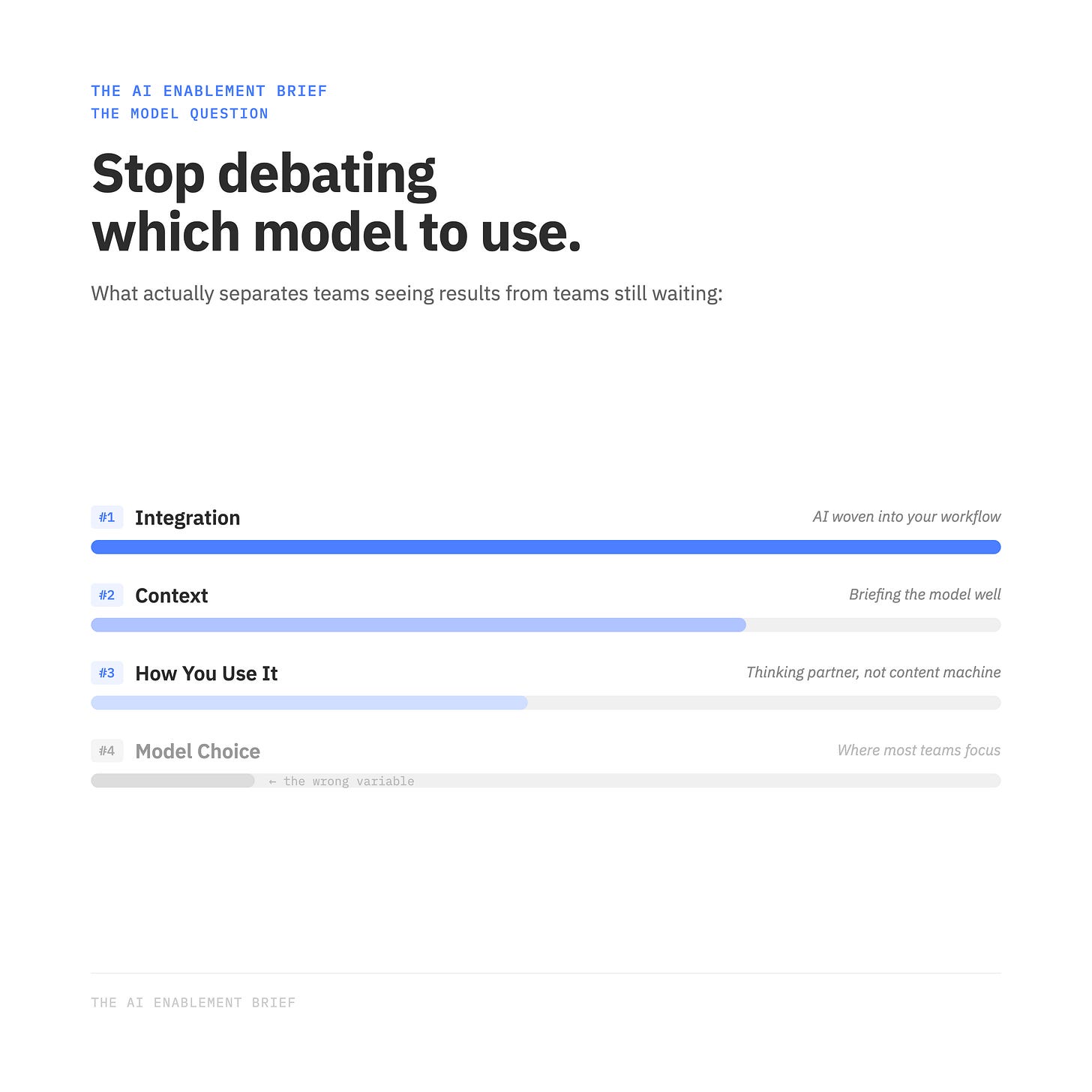

Most teams are still debating which AI to use. The ones seeing results have moved on to something else entirely.

There’s a debate happening in every marketing team, agency, and boardroom right now.

Which model should we use?

Gemini Pro or Gemini Thinking? Claude Sonnet or Opus? GPT-4o or o3? The conversation has become its own cottage industry — benchmarks, tier lists, Reddit threads, LinkedIn hot takes. And I get it. The options are genuinely overwhelming, and the stakes feel high. Pick the wrong one and maybe you’re leaving performance on the table.

But after building AI workflows at NP Digital and watching teams across the industry navigate this shift, I’ve come to a conclusion: the model debate is mostly noise.

Here’s what actually matters.

The Model Myth

The reality about today’s frontier models is that for the vast majority of marketing tasks — writing emails, drafting web content, summarizing research, building reports — Gemini, Claude, and ChatGPT are all really, really good.

Not “good enough.” Actually good.

Run the same brief through three different models and you’ll get three outputs that are far more similar than different. The gap between top models on everyday tasks is narrower than most people expect.

Model choice matters at the margins — for specialized technical tasks, extended reasoning, or cost-at-scale — but for the average marketing team, the model is not the variable that explains the performance gap between those seeing results and those still waiting to see them.

That variable is something else entirely.

The Human Content Advantage

Here’s what actually explains the gap: whether you’re using AI to create content, or to help you create content.

It’s a subtle distinction that has significant consequences.

Human-generated content consistently outperforms AI-generated content on social platforms, particularly LinkedIn and X. If you’re publishing fully AI-generated posts, you’re already taking the engagement hit, even if the content itself is technically sound. Audiences feel the difference. The platforms do too.

But this isn’t an argument against AI in content creation. It’s an argument for using it differently.

The teams winning with AI aren’t handing a brief to a model and publishing the output. They use AI as a research and thinking partner. They feed it raw notes, transcripts, competitive analysis. They use it to stress-test arguments, find angles, sharpen structure. And then they write. The AI does the thinking alongside them — it doesn’t replace the voice.

The output reads human because it is human. AI just compressed the research phase.

The Context Layer

If how you use AI matters more than which AI you use, the next question is practical: how do you actually get more out of the model you’re already using?

The answer is context.

Every LLM is working with what you give it. And most teams dramatically under-invest here. They use AI like a search engine — asking it questions from scratch — when they should be using it like a smart analyst they’re briefing.

The fix is simple: before your next piece of content, your next report, your next strategy brief, don’t start from scratch. Dump in your raw notes, a transcript from a client call, your brand guidelines, competitive intel you’ve been sitting on. Paste it in. The output quality jumps, not because the model got smarter, but because you gave it something real to work with.
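For teams that want to make the briefing habit repeatable, here is a minimal sketch of the idea in Python. It only assembles text: labeled background first, the task last. The function name `build_brief`, the section labels, and the sample strings are all illustrative assumptions, not part of any particular tool, and the result can be pasted into whichever model you already use.

```python
def build_brief(task: str, sources: dict[str, str]) -> str:
    """Assemble one prompt: labeled background material first, then the task."""
    sections = []
    for label, text in sources.items():
        text = text.strip()
        if not text:
            continue  # skip empty sources instead of sending blank sections
        sections.append(f"## {label}\n{text}")
    context = "\n\n".join(sections)
    return (
        "Use the background material below.\n\n"
        f"{context}\n\n"
        f"## Task\n{task.strip()}"
    )


# Placeholder inputs; in practice these would be your own notes and documents.
prompt = build_brief(
    task="Draft an outline for next week's client update.",
    sources={
        "Raw notes": "(paste your notes here)",
        "Client call transcript": "(paste the transcript here)",
        "Brand guidelines": "(paste the relevant guidelines here)",
    },
)
print(prompt)
```

Labeling each source is a design choice: it lets the model tell a transcript apart from a guideline, much as a written brief would for an analyst.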

Context is the first unlock most teams skip, and it costs nothing to start.

The Integration Layer

Context gets you far. Integration is where the real value compounds — and this is where I see the biggest gap between teams that are AI-aware and teams that are actually AI-enabled.

Feeding context manually into a chat window is a good start. But every time you close the tab, the context is gone. Every new conversation starts from zero. You’re re-briefing a new analyst every single time.

Integration changes this entirely.

Claude Desktop is the clearest example right now. You can connect your workspace to the tools your team actually uses — Supermetrics, BigQuery, Asana, Monday, and more. The AI stops operating in isolation and starts operating inside your workflow. It knows the context because it’s connected to the systems that hold the context. You stop briefing it and start directing it.
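To make the difference between briefing and directing concrete, here is a small Python sketch of the pattern. It is not how Claude Desktop or any vendor's connectors work internally. The `Workspace` class, the `direct` method, and the two stand-in connectors are hypothetical names that return placeholder text. The point is only that context is fetched from connected sources each time, so nobody pastes it in again.

```python
from typing import Callable, Dict

# A connector is any zero-argument function that returns current context as text.
Connector = Callable[[], str]


class Workspace:
    """Hypothetical helper: holds named connectors and builds a brief on demand."""

    def __init__(self) -> None:
        self._connectors: Dict[str, Connector] = {}

    def connect(self, name: str, fetch: Connector) -> None:
        """Register a source of context under a readable label."""
        self._connectors[name] = fetch

    def direct(self, instruction: str) -> str:
        # Context is fetched fresh on every call, so nothing is re-pasted by hand.
        parts = [
            f"## {name}\n{fetch().strip()}"
            for name, fetch in self._connectors.items()
        ]
        parts.append(f"## Instruction\n{instruction.strip()}")
        return "\n\n".join(parts)


# Stand-in connectors with placeholder text; real ones would query your own systems.
def campaign_metrics() -> str:
    return "(placeholder) latest campaign metrics export"


def open_tasks() -> str:
    return "(placeholder) open tasks from the project board"


workspace = Workspace()
workspace.connect("Campaign metrics", campaign_metrics)
workspace.connect("Open tasks", open_tasks)
print(workspace.direct("Summarize what changed this week."))
```

Once the connections are set up, only the instruction changes from one request to the next, which is the shift from re-briefing to directing.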

That’s the moment your AI stops being a chatbot and becomes a business partner.

And that’s the shift worth chasing — not the next model release, not the next benchmark. The moment you stop being AI-aware and start being AI-enabled.

Where to Focus Next

If you’re still in the middle of the model debate, here’s a more useful place to put your attention:

Start with context. Before your next brief, dump everything you know into the model first — your notes, your research, previous work samples, brand voice documentation. Don’t start from scratch. See what happens to the output.

Then look at one integration. Not a moonshot. Just one tool your team uses daily that could connect to your AI and eliminate one manual handoff somewhere in your workflow. Start there and see what it unlocks.

The model you’re already using is probably good enough. The question is whether you’re using it well.

Are you still in the model debate — or have you moved past it?